We had a short outage in production this week. Exciting! The system recovered, no data was lost, and we learned a bit about our environment. Maybe.

Se Yeon checked the website and found that it was unresponsive. The health checks were not responding, and Kubernetes was shutting down app nodes until there weren’t any left. Aaron scaled them up, and the system recovered. But why did it go down?

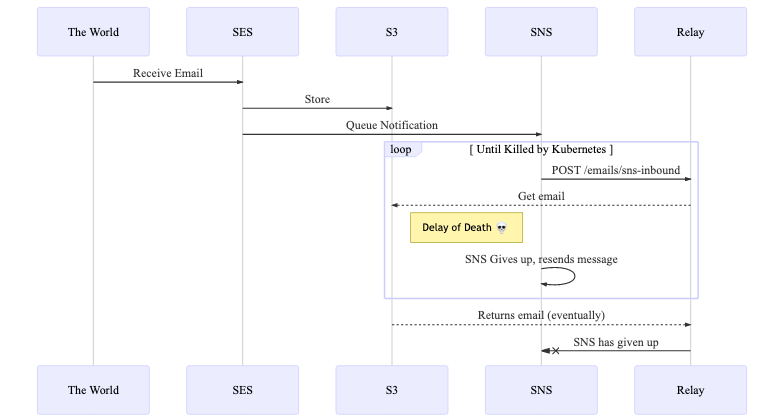

We use several Amazon services for Relay. The Simple Email Service (SES) receives emails from the world, and stores them in the Simple Storage Service (S3). The Simple Notification Service (SNS) tells the Relay app about the new message, by calling the /emails/sns-inbound endpoint. To process the request, the Relay app then fetches the email from S3, analyzes it, forwards an email using SES, and then tells SNS that it is done. If there’s an error, SNS will retry, over and over, for an hour. It then sticks the email in another bucket, handled by a different process.

My best guess is that reading from S3 was slower than usual, taking longer than SNS was willing to wait. While Relay continued to wait on S3, SNS gave up, which is treated like an error, and tried calling /emails/sns-inbound again. This repeat request was also slow, and tied up another Relay inbound connection. Soon, the Relay app was doing nothing but waiting on S3, and there was no more connection capacity, including for the health check. With health checks not responding, Kubernetes did what we told it – terminated the hung apps and started new ones. Maybe it helped, or maybe they proceeded to fill up all the connections and wait on S3.
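Here is a toy model of that guess, not a reconstruction of the incident. Every number in it (the 15-second SNS timeout, 30 connection slots, one new email per second) is made up for illustration, and real SNS backs off between retries instead of resending on a fixed schedule. It only shows how a read that outlasts the caller's timeout can turn a steady trickle into a full connection pool.

```python
def first_saturation(s3_latency, sns_timeout=15, capacity=30,
                     arrivals_per_sec=1, seconds=300):
    """Return the first second at which a request finds no free slot, or None.

    All parameters are illustrative assumptions, not Relay's real settings.
    """
    finish_times = []   # finish time of every request currently holding a slot
    retries_due = {}    # second -> number of SNS retries arriving at that second
    for now in range(seconds):
        # Free the slots of requests whose S3 read has completed.
        finish_times = [t for t in finish_times if t > now]
        incoming = arrivals_per_sec + retries_due.pop(now, 0)
        for _ in range(incoming):
            if len(finish_times) >= capacity:
                return now  # nothing left, not even for the health check
            finish_times.append(now + s3_latency)
            if s3_latency > sns_timeout:
                # SNS gives up first and sends the message again, while the
                # original request still holds its slot waiting on S3.
                due = now + sns_timeout
                retries_due[due] = retries_due.get(due, 0) + 1
    return None


print(first_saturation(s3_latency=2))   # fast S3: the pool never fills
print(first_saturation(s3_latency=20))  # slow S3: retries pile up and fill it
```

With a fast read, each request frees its slot long before SNS loses patience, so nothing accumulates. Once the read takes longer than the timeout, every attempt spawns another attempt, and the pool fills in well under a minute with these made-up numbers.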

The good news is that the system did not lose data. SNS churned for a bit, but when the issue resolved itself, it retried the messages and they were successfully processed.

This did expose a few issues. An S3 error handler had an error itself, causing more issues. The default S3 client automatically retries (a “legacy retry mode” with exponential backoff). We switched to the “standard retry mode” and turned off retries for the S3 client, since we want to fail quickly and let SNS retry (PR 1567). We also tuned our alerting to tell us things are bad before our users do.
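For anyone wanting to do something similar, this is roughly what a no-retry S3 client looks like in boto3. It is a sketch of the idea, not a copy of the change in the PR, and the region name is a placeholder.

```python
import boto3
from botocore.config import Config

# "standard" retry mode, with a single total attempt: the first request is
# the only request, so a slow or failed read surfaces as an error right away
# and SNS handles the retry.
s3_config = Config(retries={"mode": "standard", "total_max_attempts": 1})

# region_name is a placeholder for this example.
s3_client = boto3.client("s3", region_name="us-east-1", config=s3_config)
```

Note that `total_max_attempts` counts the initial request, so 1 means no retries. The older `max_attempts` key counts only the retries, so the equivalent value there is 0.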

There are some long-term issues as well. I’m guessing at the cause of the incident, based on timing data for the /emails/sns-inbound endpoint, the S3 client configuration, and some previous experience. However, we don’t have detailed metrics on external API calls, much less trends leading up to the incident, to say for sure what happened. We could use some better telemetry and automated reporting. We’re doing a lot of work in an endpoint, and instead we could capture the data and process it in a different job, but that could just move the issue to somewhere less visible. I prefer to add metrics, measure, and then adjust the infrastructure, but I think implementing more scalable infrastructure first is valid as well.
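As a sketch of the "add metrics, then measure" option, timing an external call does not take much code. The names below (`timed`, `timings_ms`, `fetch_email_from_s3`) are hypothetical, and the in-memory dictionary stands in for whatever metrics client a real deployment would report to.

```python
import time
from collections import defaultdict
from contextlib import contextmanager

# In-memory stand-in for a real metrics client.
timings_ms = defaultdict(list)


@contextmanager
def timed(name):
    """Record how long the wrapped block takes, even if it raises."""
    start = time.monotonic()
    try:
        yield
    finally:
        timings_ms[name].append((time.monotonic() - start) * 1000)


def fetch_email_from_s3(bucket, key):
    """Hypothetical stand-in for the real S3 read."""
    time.sleep(0.01)
    return b"raw email bytes"


with timed("s3.get_object"):
    body = fetch_email_from_s3("example-bucket", "example-key")

print(timings_ms["s3.get_object"])
```

Recording the duration in a `finally` block matters here, because the slow and failing calls are exactly the ones worth seeing in the trend data.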

This is pretty typical for a project that glues together third-party services. It is hard to tell what data you’ll get and how fast it will come. Some issues don’t appear until you’re at production load, or at 2x growth, or 10x growth. I’m pretty happy with the scope of this incident, and that the stream is still a trickle and not a flood.

I had Monday off, but the kids had a whole week without school. We also had a winter storm, starting with hail that resembled Sonic ice cubes, and would have shut down school if it was in session. Everything around me was screaming “Winter Break!”, and it was cold in my basement office. I didn’t take time off, because it was a short work week anyway, but made sure to spend some evenings with the kids and in front of the fireplace.

The dogs didn’t get a lot of walks due to the winter storm. My wife and I took them to a local park, to train the puppy and get the older dogs some exercise. The ground alternated between mud and ice, and the dogs were ungovernable. Very little training was done, Finn cracked the ice and swam a bit, and many squirrels were threatened. February is (hopefully!) the end of winter weather, which has very much overstayed its welcome.

Some recommendations:

- Don’t commit to doing something weekly, unless you really enjoy it. Days late with this one!

- I’m enjoying Babylon 5 on HBO Max. I watched it back in the ’90s, but missed the last seasons in college. I think they remastered the CGI, but I don’t watch it for the effects. The characters are excellent, and they slowly tease out the “long plot” over several episodes. The aliens steal the show – Peter Jurasik’s Londo, Andreas Katsulas’s G’Kar, and Bill Mumy’s Lennier are fun characters, and well acted. The action is slow enough that I can fold laundry and still catch what’s happening.

Tags: weekly log